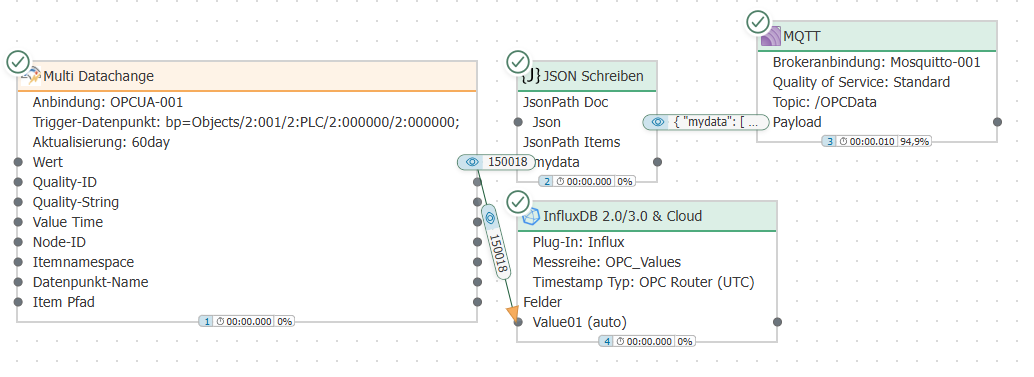

OPC data transfer via multi-datachange trigger

In our performance benchmark, we transferred around 28,500 OPC data points per second simultaneously to MQTT and InfluxDB. Throughput was limited by the processing capacity of the OPC server, which became the bottleneck under higher loads.

Systems

OPC Router host:

- OS: Windows Server 2019 Standard 1809
- RAM: 16 GB
- Processor: 64-bit, 2.6 GHz (4 cores)
- OPC Router: 5.3.5008.157

KEPServerEx host 1:

- OS: Windows Server 2019 Standard 1809
- RAM: 12 GB
- Processor: 64-bit, 2.6 GHz (4 cores)
- KEPServerEx: V6.16.203.0

KEPServerEx host 2:

- OS: Windows 10 Pro N 22H2
- RAM: 12 GB
- Processor: 64-bit, 2.6 GHz (4 cores)
- KEPServerEx: V6.16.203.0

Projects

Two KEPServerEx projects, each with:

- One channel (simulator)
- One device (16-bit device)
- Two tag groups on that device
- 40 sub-tag groups per tag group
- 200 tags per sub-tag group (random 1000)

Total: 32,000 tags (2 projects × 2 tag groups × 40 sub-tag groups × 200 tags)
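The tag hierarchy above multiplies out to the stated total. The sketch below checks this by generating one path per simulated tag. The counts come from the project description. The path naming scheme (`Server1/Channel1.Device1.Group1.Sub01.Tag001`) is purely hypothetical and is not the actual KEPServerEx tag naming used in the benchmark.

```python
from itertools import product

# Counts from the benchmark's KEPServerEx project description.
PROJECTS = 2              # KEPServerEx projects (one channel, one device each)
TAG_GROUPS = 2            # tag groups per device
SUB_GROUPS = 40           # sub-tag groups per tag group
TAGS_PER_SUB_GROUP = 200  # tags per sub-tag group


def tag_paths() -> list[str]:
    """Build one hypothetical path per simulated tag (naming is illustrative only)."""
    return [
        f"Server{p}/Channel1.Device1.Group{g}.Sub{s:02d}.Tag{t:03d}"
        for p, g, s, t in product(
            range(1, PROJECTS + 1),
            range(1, TAG_GROUPS + 1),
            range(1, SUB_GROUPS + 1),
            range(1, TAGS_PER_SUB_GROUP + 1),
        )
    ]


paths = tag_paths()
print(len(paths))  # 32000
print(paths[0])    # Server1/Channel1.Device1.Group1.Sub01.Tag001
```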

OPC Router project

- Four OPC UA client connections (two plug-ins per KEPServerEx)

- Each plug-in is used by 40 template instances, each containing one multi-datachange trigger

- Each multi-datachange trigger monitors one sub-tag group (200 tags)

- When a trigger fires, the values it reads are written to InfluxDB and published via MQTT

Summary: each plug-in monitors and reads 8,000 tags (40 triggers × 200 tags), which gives 32,000 tags across all four plug-ins.
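The following sketch models how the OPC Router project fans out: four plug-ins, 40 template instances per plug-in, and one 200-tag trigger per instance. It is a counting model only, not OPC Router configuration. The `TemplateInstance` class is hypothetical. The mapping of each plug-in to one tag group (40 sub-tag groups) of its KEPServerEx is our assumption and is not stated in the source.

```python
from dataclasses import dataclass

# Counts from the OPC Router project description. The assumption that each
# plug-in covers the 40 sub-tag groups of one tag group is ours.
SERVERS = 2                # KEPServerEx instances
PLUGINS_PER_SERVER = 2     # OPC UA client connections (plug-ins) per server
INSTANCES_PER_PLUGIN = 40  # template instances using each plug-in
TAGS_PER_TRIGGER = 200     # tags in one sub-tag group


@dataclass(frozen=True)
class TemplateInstance:
    """Hypothetical record for one template instance and its trigger."""
    server: int      # KEPServerEx 1 or 2
    plugin: int      # connection #1 or #2 on that server
    sub_group: int   # sub-tag group watched by this instance's trigger
    tags: int = TAGS_PER_TRIGGER


instances = [
    TemplateInstance(server, plugin, sub_group)
    for server in range(1, SERVERS + 1)
    for plugin in range(1, PLUGINS_PER_SERVER + 1)
    for sub_group in range(1, INSTANCES_PER_PLUGIN + 1)
]


def tags_per_plugin(items: list[TemplateInstance]) -> dict[tuple[int, int], int]:
    """Sum the monitored tags for each (server, plug-in) pair."""
    totals: dict[tuple[int, int], int] = {}
    for inst in items:
        key = (inst.server, inst.plugin)
        totals[key] = totals.get(key, 0) + inst.tags
    return totals


per_plugin = tags_per_plugin(instances)
print(len(instances))            # 160 template instances
print(per_plugin[(1, 1)])        # 8000 tags per plug-in
print(sum(per_plugin.values()))  # 32000 tags in total
```

The 160 template instances correspond to the 160 connections listed in the evaluation table.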

OPC UA client connection

Deviations from default:

- Subscription – Register OPC tags at startup: true

- Advanced – Sample rate (ms): 1000

Multi-datachange trigger

Deviations from default:

- Update items: false

## Evaluation

The metric is the average time interval between consecutive executions of the multi-datachange triggers, reported per plug-in (OPC UA client connection).
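The averaging can be sketched as follows, assuming a list of execution timestamps is available for each trigger. The source does not describe how the timestamps were collected or aggregated. This sketch averages the gaps of each trigger first and then averages those per-trigger means across a plug-in's triggers. The function names are hypothetical, and the sample timestamps are invented for illustration and are not benchmark data.

```python
from statistics import mean


def average_interval_ms(timestamps_ms: list[float]) -> float:
    """Mean gap between consecutive executions of a single trigger."""
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two executions to form an interval")
    ordered = sorted(timestamps_ms)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return float(mean(gaps))


def average_per_plugin(executions: dict[str, list[list[float]]]) -> dict[str, float]:
    """Average the per-trigger means over all triggers that use a plug-in."""
    return {
        plugin: float(mean(average_interval_ms(ts) for ts in triggers))
        for plugin, triggers in executions.items()
    }


# Invented execution timestamps (ms) for two triggers of one plug-in.
# These are NOT benchmark data.
sample = {"Connection #1": [[0, 1000, 2100, 3150], [20, 1120, 2200]]}
print(average_per_plugin(sample))  # {'Connection #1': 1070.0}
```

Pooling all gaps of a plug-in into a single mean would weight triggers with more executions more heavily. The two approaches diverge only when triggers fire at noticeably different rates.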

| Connections | Data points | Plug-ins | OPC servers | Avg. trigger interval | Measured plug-in |
|---|---|---|---|---|---|
| 160 | 32,000 | 4 | 2 | 1100 ms | KEPServerEx 1 Connection #1 |
| 160 | 32,000 | 4 | 2 | 1027 ms | KEPServerEx 1 Connection #2 |
| 160 | 32,000 | 4 | 2 | 1204 ms | KEPServerEx 2 Connection #1 |
| 160 | 32,000 | 4 | 2 | 1101 ms | KEPServerEx 2 Connection #2 |
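As a rough cross-check, the measured intervals in the table can be converted into a throughput estimate. The sketch assumes that every trigger execution transfers all 200 tags of its sub-tag group, which the source does not state explicitly. It also ignores any variation in the intervals, so treat the result as a back-of-the-envelope figure rather than a reproduction of the measurement.

```python
# Measured average trigger intervals (ms) per plug-in, taken from the table.
INTERVALS_MS = {
    "KEPServerEx 1 Connection #1": 1100,
    "KEPServerEx 1 Connection #2": 1027,
    "KEPServerEx 2 Connection #1": 1204,
    "KEPServerEx 2 Connection #2": 1101,
}
TRIGGERS_PER_PLUGIN = 40
TAGS_PER_TRIGGER = 200


def values_per_second(interval_ms: float) -> float:
    """Values one plug-in delivers per second, ASSUMING every execution
    of every trigger transfers all of its tags."""
    return TRIGGERS_PER_PLUGIN * TAGS_PER_TRIGGER * 1000 / interval_ms


per_plugin = {name: values_per_second(ms) for name, ms in INTERVALS_MS.items()}
total = sum(per_plugin.values())
for name, rate in per_plugin.items():
    print(f"{name}: {rate:,.0f} values/s")
print(f"Total: {total:,.0f} values/s")
```

Under that assumption the total comes to about 28,973 values per second. This is close to the roughly 28,500 data points per second reported at the top.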

Project files

Download Benchmark_Multidatachange.rpe